In our previous blog in the series, “5 DIY Cyber Security Skills Every IT Professional Needs to Master,” I discussed the basics of coding and programming that every cyber handyman needs to know. Today, I’ll cover the command line, a cyber handyman’s best friend.

I first thought about going the “Top X Most Useful Commands” route for this blog topic, but those blogs often seem arbitrarily contrived. Instead, I’ll focus on how the command line can be valuable for specific cyber handyman-related tasks.

Personally, I love the command line. I can accomplish more with a keyboard and shortcuts instead of wasting time hunting for my cursor on the screen. Also, with a command line, I’m not confined to someone else’s options and rules.

While GUIs are absolutely useful, the command line provides some clear benefits over a GUI, such as:

- Flexibility: If you know a little coding and command line syntax, you can often script things faster than trying to write/find a GUI-based program to do the same thing.

- Direct Access: Whether you’re looking at a Windows or Linux box, it’s easier to gain remote access to systems via a command line. Other times, there may not be any GUI installed at all.

- Reliability: A GUI may change, but the command line generally stays the same from system to system, especially if you work in a Linux world.

For this blog, we’ll review some basic Domain Name System (DNS) analysis on a bind9 DNS server. Our primary goal is to find out whether any systems on our network have queried any known-malicious domain names. Our secondary goal is to learn how we can use the command line to simplify certain tasks we might normally handle through the GUI.

To begin, you need to know the pipe “|”. The pipe is your output-to-input best friend. The output of the command before the pipe is used as input for the command after the pipe. In a GUI, this would be the equivalent of saving a file from one program and importing it into another. On the command line it’s simple, as long as each side of the pipe knows how to work with the supplied data.
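As a tiny illustration of the pipe (not taken from the original post), the sketch below pushes three made-up lines through sort and uniq. The sample words are invented purely for the demo.

```shell
# Three made-up lines stand in for real data.
# printf writes them, sort puts identical lines next to each other,
# and uniq -c counts each group of adjacent duplicates.
counts=$(printf 'beta\nalpha\nbeta\n' | sort | uniq -c)
echo "$counts"
```

Each command only sees the text handed to it by the command on its left, which is why the order of the stages matters.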

We’ll use this first set of commands to talk about a few different individual commands. Again, everywhere you see a pipe, the output of everything before that pipe is used as input to the command after the pipe:

This string includes the following commands:

- grep named /var/log/syslog: Searches for DNS-specific entries from the syslog file (using the string ‘named’)

- awk -F' ' '{print $10}': Breaks the results into fields separated by spaces and prints only the 10th field (which is the queried domain name)

- sort: Sorts the results alphabetically, which groups identical names together (uniq only collapses adjacent duplicates)

- uniq -c: Counts the unique results and prints the number of hits and the result

- sort -n: Sorts numerically
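Put together, the commands in the breakdown above would form a pipeline like the one sketched here. The original command was shown as an image, so treat this as a reconstruction from the list rather than a verbatim copy. The sample log lines are made up, and they assume a query log format in which the queried name lands in the 10th whitespace-separated field; check a line of your own log before relying on $10.

```shell
# Reconstructed from the breakdown above, as it would run on the server:
#   grep named /var/log/syslog | awk -F' ' '{print $10}' | sort | uniq -c | sort -n

# Made-up sample log so the sketch runs anywhere; real entries may differ.
log=$(mktemp)
cat > "$log" <<'EOF'
Jan 12 10:15:01 ns1 named[1234]: client 10.10.1.1#53211 (example.com): query: example.com IN A + (10.10.1.5)
Jan 12 10:15:02 ns1 named[1234]: client 10.10.1.2#40112 (example.org): query: example.org IN A + (10.10.1.5)
Jan 12 10:15:03 ns1 cron[99]: (root) CMD (run-parts /etc/cron.hourly)
Jan 12 10:15:04 ns1 named[1234]: client 10.10.1.3#41877 (example.com): query: example.com IN A + (10.10.1.5)
EOF

# Keep DNS entries, pull out field 10, then count and rank the names.
query_counts=$(grep named "$log" | awk -F' ' '{print $10}' | sort | uniq -c | sort -n)
echo "$query_counts"
rm -f "$log"
```

Because sort -n sorts in ascending order, the most frequently queried names end up at the bottom of the output.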

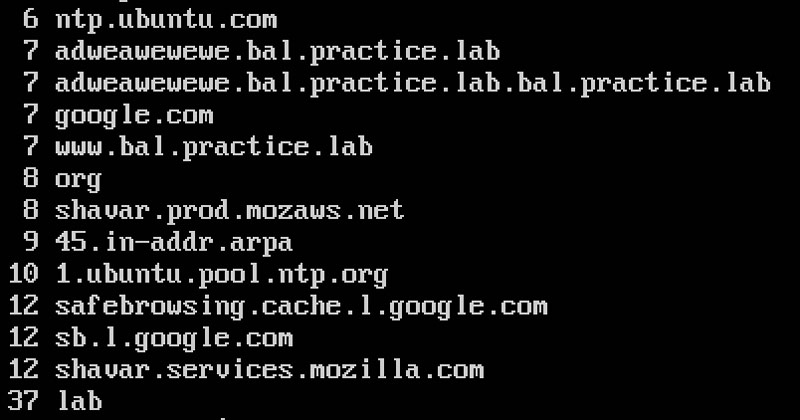

And here are the (partial) results:

With these results, I can tell which domains are being queried the most (or least!). Considering this is a lab environment, the number of queries is lower than normal, but the principle is the same regardless.

Since I wanted domain names, I changed the command and added the redirect “>” operator. And since I wasn’t terribly concerned with the number of hits I got at this point, I omitted the uniq -c and second sort command. Instead, I used the -u option of the sort command to get unique results. The results of this command are “redirected” to a file for further processing.

This one string includes the following commands:

- grep named /var/log/syslog: searches for DNS-specific entries from the syslog file (using the string ‘named’)

- awk -F' ' '{print $10}': breaks the results into fields separated by spaces and prints only the 10th field (which is the queried domain name)

- sort -u: sorts and provides unique results

- > myDNS.txt: sends the results to this file
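The redirect version, again reconstructed from the list above because the original command was an image, might look like this sketch. The log lines and the temporary directory are made up so the example can run without a real DNS server.

```shell
# Reconstructed from the breakdown above, as it would run on the server:
#   grep named /var/log/syslog | awk -F' ' '{print $10}' | sort -u > myDNS.txt

workdir=$(mktemp -d)
# Made-up log lines; the queried name is assumed to be the 10th field.
cat > "$workdir/syslog.sample" <<'EOF'
Jan 12 10:15:01 ns1 named[1234]: client 10.10.1.1#53211 (example.org): query: example.org IN A + (10.10.1.5)
Jan 12 10:15:02 ns1 named[1234]: client 10.10.1.2#40112 (example.com): query: example.com IN A + (10.10.1.5)
Jan 12 10:15:04 ns1 named[1234]: client 10.10.1.3#41877 (example.org): query: example.org IN A + (10.10.1.5)
EOF

# sort -u sorts and removes duplicates in one step; > writes the result to a file.
grep named "$workdir/syslog.sample" | awk -F' ' '{print $10}' | sort -u > "$workdir/myDNS.txt"
unique_domains=$(cat "$workdir/myDNS.txt")
echo "$unique_domains"
rm -rf "$workdir"
```

Note that > overwrites the target file each time. Use >> instead if you want to append to an existing list.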

Now I have a list of all the domain names that were queried through this server. If you know ssh, you can run the same query on a remote server just as easily (using the command line, of course). It’d be great if we could compare this list against a list of known malicious domain names. You can use wget to grab a file that has that info. The wget command, in its simplest form, downloads the given webpage. This location does actually host this list, but there are many like it, so you can take your pick. Bonus task: use multiple lists and combine the unique entries into one file.
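For the download and the bonus task, here is one possible sketch. The URL, the host name dns-server, and the file names list1.txt and list2.txt are all hypothetical placeholders, and the network commands are left as comments so the example runs offline.

```shell
# Hypothetical URL and host; substitute a blocklist and server you trust.
# Not executed here because they need network access:
#   wget -O list1.txt https://blocklist.example/domains.txt
#   ssh admin@dns-server "grep named /var/log/syslog" | awk '{print $10}' | sort -u > myDNS.txt

# Bonus task: merge several lists into one set of unique entries.
merge_lists() {
    # sort -u reads every file it is given, sorts the combined lines,
    # and drops duplicates.
    sort -u "$@"
}

workdir=$(mktemp -d)
printf 'bad.example\nevil.example\n' > "$workdir/list1.txt"
printf 'evil.example\nworse.example\n' > "$workdir/list2.txt"
merge_lists "$workdir/list1.txt" "$workdir/list2.txt" > "$workdir/combined.txt"
merged=$(cat "$workdir/combined.txt")
echo "$merged"
rm -rf "$workdir"
```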

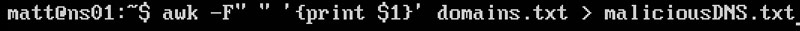

This file has extra info that I don’t need right now for the comparison, so I stripped it down using awk and a redirect to keep only the domain names.
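The exact awk command depends on the layout of the list you downloaded, which the text does not spell out. As a sketch, assume a hosts-file style list where each useful line is an IP address followed by a domain and comment lines start with “#”. That layout, and the file name downloaded.txt, are assumptions for illustration.

```shell
workdir=$(mktemp -d)
# ASSUMPTION: hosts-file style input; your list may use another layout.
cat > "$workdir/downloaded.txt" <<'EOF'
# Sample blocklist (made up for illustration)
127.0.0.1  bad.example
127.0.0.1  evil.example

EOF

# Skip comment and blank lines, then print only the second field (the domain).
awk '!/^#/ && NF >= 2 {print $2}' "$workdir/downloaded.txt" > "$workdir/maliciousDNS.txt"
bad_domains=$(cat "$workdir/maliciousDNS.txt")
echo "$bad_domains"
rm -rf "$workdir"
```

If your list puts the domain in a different column, change $2 to match.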

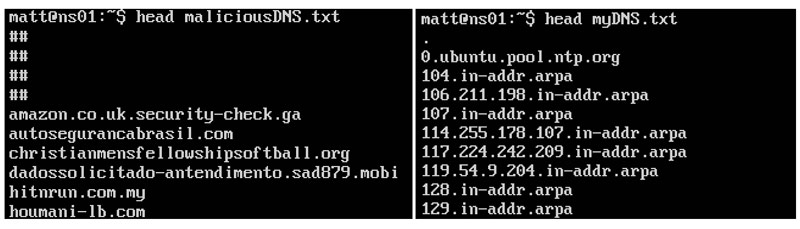

So now we have two files we’ll work with: myDNS.txt (our queries) and maliciousDNS.txt (bad domains). Here are the first ten lines from each:

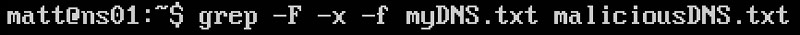

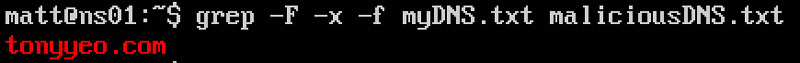

I used one of my favorite commands, grep, to compare the two files and find out which queries match a malicious domain.

Here’s a breakdown of the command:

- grep: does the search

- -F: treat each pattern as a fixed string rather than a regular expression (patterns are separated by newlines)

- -f: file to get the strings from

- -x: only do whole-line matches

- myDNS.txt maliciousDNS.txt: files to compare
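Combining the options from the breakdown above gives a command along the lines of grep -Fxf myDNS.txt maliciousDNS.txt. The sketch below runs it against two small made-up files so you can see the effect; the domain names are invented.

```shell
workdir=$(mktemp -d)
# Made-up stand-ins for the two files described in the post.
printf 'example.com\nevil.example\nexample.org\n' > "$workdir/myDNS.txt"
printf 'bad.example\nevil.example\nworse.example\n' > "$workdir/maliciousDNS.txt"

# -F: fixed strings, -x: whole-line matches only, -f: read patterns from myDNS.txt
matches=$(grep -Fxf "$workdir/myDNS.txt" "$workdir/maliciousDNS.txt")
echo "$matches"
rm -rf "$workdir"
```

The -F and -x options matter here. Without -F, the dots in a domain name act as regular expression wildcards, and without -x a queried name could match a longer malicious name that merely contains it.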

After running the command, we got these matches:

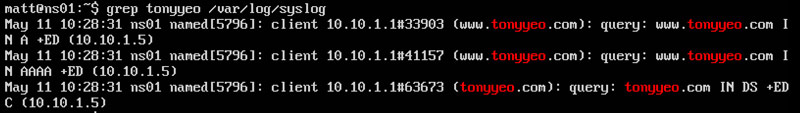

Sure enough, I magically got a hit. Apparently one of my systems attempted to resolve this malicious domain name. Let’s briefly review my DNS logs to find out which system queried for this domain name.

It looks like my system at 10.10.1.1 issued this request. At this point, you have a box to check for potential malicious activity. We simply used tools that are available on almost every Linux installation.
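The log search itself is not shown in the text, so here is one way it could be done. This sketch assumes the same made-up log format as before, with the client address and port in field 7 and the queried name in field 10, and it uses evil.example as a placeholder for the matched domain.

```shell
log=$(mktemp)
# Made-up log lines; real bind9 entries may put these values in other fields.
cat > "$log" <<'EOF'
Jan 12 10:15:01 ns1 named[1234]: client 10.10.1.1#53211 (evil.example): query: evil.example IN A + (10.10.1.5)
Jan 12 10:15:02 ns1 named[1234]: client 10.10.1.2#40112 (example.org): query: example.org IN A + (10.10.1.5)
Jan 12 10:15:09 ns1 named[1234]: client 10.10.1.1#53290 (evil.example): query: evil.example IN A + (10.10.1.5)
EOF

domain="evil.example"
# Keep entries for the domain, take the client field, strip the "#port" suffix,
# and list each client address once.
clients=$(grep named "$log" | awk -v d="$domain" '$10 == d {print $7}' | cut -d'#' -f1 | sort -u)
echo "$clients"
rm -f "$log"
```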

If you are a curious Windows user, you can run the same commands on a Windows system that has these tools installed. Also, if you know some basic programming or scripting, you can grow this into a script that grabs a malicious domain list on a schedule, extracts names from the malicious list and your DNS server, identifies matches, then searches your DNS logs to identify hosts querying for the malicious domains. Now that is what being a cyber handyperson is all about.
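A script like that could start from something as small as the sketch below. The function name find_bad_queries, the script path in the cron comment, and the sample data are hypothetical, and the field positions again assume the made-up log format used for illustration.

```shell
# Print "client domain" for every logged query whose name is on the bad list.
# ASSUMPTION: field 7 is client#port and field 10 is the queried name.
find_bad_queries() {
    log_file=$1
    bad_list=$2
    grep named "$log_file" |
        awk -v list="$bad_list" '
            # Load the bad domains into a lookup table before reading the log.
            BEGIN { while ((getline line < list) > 0) bad[line] = 1 }
            # For each matching query, strip the port and print client and domain.
            ($10 in bad) { split($7, c, "#"); print c[1], $10 }
        ' |
        sort -u
}

# Demo with made-up data.
workdir=$(mktemp -d)
printf 'bad.example\nevil.example\n' > "$workdir/maliciousDNS.txt"
cat > "$workdir/syslog.sample" <<'EOF'
Jan 12 10:15:01 ns1 named[1234]: client 10.10.1.1#53211 (evil.example): query: evil.example IN A + (10.10.1.5)
Jan 12 10:15:02 ns1 named[1234]: client 10.10.1.2#40112 (example.org): query: example.org IN A + (10.10.1.5)
EOF
hits=$(find_bad_queries "$workdir/syslog.sample" "$workdir/maliciousDNS.txt")
echo "$hits"
rm -rf "$workdir"

# To run it on a schedule, a cron entry could call a script containing this
# function (hypothetical path):
#   0 * * * * /usr/local/bin/check_dns.sh
```

Doing the lookup inside awk keeps the client address and the domain on the same line, so there is no need for a second pass over the log for each match.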

This process is just one example of why the command line is so useful. We didn’t need a GUI or expensive tools. We relied on basic DNS analysis with existing, free utilities. Could you do that in a GUI? If so, in the same amount of time? Not likely. This is the power of the command line and why it’s an irreplaceable skill in the realm of cyber security.

In our next blog, we’ll discuss the wonderful world of vulnerability scanning. In the meantime, you can catch up on our previous cyber handyman blogs and learn more about our training services.

Matt Kuznia is the strangest mix of things you can imagine. He’s part musician, black belt, snowboarder, computer geek, Baltimore Orioles fan, runner, and of course, DIY’er (cyber and otherwise).

Matt Kuznia is a senior associate at Delta Risk LLC, a Chertoff Group Company that offers managed security services. You can follow him on Twitter for his latest #cyberhandyman tips and tricks. Read more Delta Risk LLC blogs here.