Oh, the places we go . . . with apps in the cloud.

A comprehensive six-month study released by Proofpoint in March 2019 reports that (oh, to our surprise) attackers are “leveraging legacy protocols and credential dumps to increase the speed and effectiveness of brute force account compromises at scale.” Yikes!! At SCALE!

Threat actors aim their attacks at the platforms and services that will give them the greatest ROI, which means targeting the systems with the greatest number of users. As such, most of today’s attacks are targeted at Microsoft Office 365 (the world’s most widely used productivity suite) and G Suite.

What happens when they get in?

According to the report, once hackers get into a “trusted” account, they launch an internal phishing attack or a business email compromise (BEC) attack, with the ultimate goal of extending their reach into the organization so they can do bad things such as steal money or information (financial gain is a big motive in these types of attacks). Here’s a rough overview of how it works:

- Attacker > compromises a cloud account via a phishing campaign or by stealing an employee’s credentials

- Once they have control of the account > they move laterally within the SaaS environment to compromise other user accounts (we’re talking multiple), which is easier to do since other employees trust the account they’re getting emails or attachments from (Yikes again.)

- From there, the attacker can do many things, including launching man-in-the-middle (MITM) attacks or setting “mail delegation” (i.e., when you grant access to your account to another person)

- Ultimate goal > typically to get money or information

If you want to know more about BEC attacks, check out Trustwave’s blog, which does a great job of summarizing many of the approaches scammers take, including the use of business domains in emails that look similar to the email of an executive at a targeted company.

Staying on top of the cloud, and ahead of the bad guys

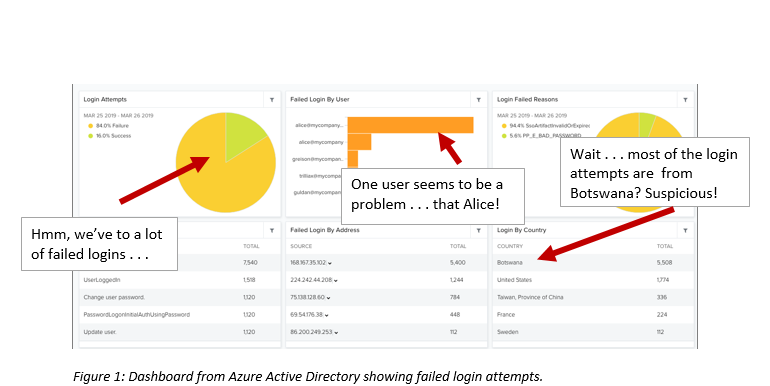

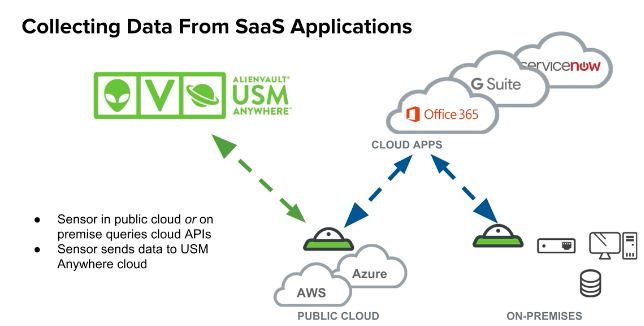

As part of the “layered security” needed to protect your cloud assets, the AT&T USM Anywhere Office 365 App can be used to monitor cloud activity, including excessive failed logins such as the ones mentioned in the Proofpoint report. (Note: it also monitors other potentially malicious activity, including file activity, and it brings in the context of your on-prem environment as well.)

The screenshot in figure 1, for example, shows a dashboard for Microsoft Azure Active Directory with information on login activity, including quite a few failed login attempts.

One particular user, “Alice,” is apparently the main culprit (or someone trying to pose as Alice). You can also see logins by country of origin, and in this case we’re seeing a spike in logins originating from Botswana. Hmmm . . . might be worth looking into, considering that “Alice” works out of a US office.
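To make the "logins by country" check concrete, here is a minimal Python sketch of the same idea outside any product. Everything in it is an assumption for illustration: the event fields (`user`, `country`, `outcome`), the `HOME_COUNTRY` mapping, and the sample data are hypothetical and are not the USM Anywhere schema or API.

```python
from collections import Counter

# Hypothetical login events; field names are illustrative only.
EVENTS = [
    {"user": "alice", "country": "US", "outcome": "success"},
    {"user": "alice", "country": "BW", "outcome": "failure"},
    {"user": "alice", "country": "BW", "outcome": "failure"},
    {"user": "bob", "country": "US", "outcome": "success"},
]

# Assumed mapping of each user to the country they normally work from.
HOME_COUNTRY = {"alice": "US", "bob": "US"}


def unexpected_country_logins(events, home_country):
    """Count login events per (user, country) where the country is not the user's usual one."""
    counts = Counter()
    for event in events:
        expected = home_country.get(event["user"])
        # Users with no known home country are skipped rather than flagged.
        if expected is not None and event["country"] != expected:
            counts[(event["user"], event["country"])] += 1
    return counts


suspicious = unexpected_country_logins(EVENTS, HOME_COUNTRY)
for (user, country), n in suspicious.items():
    print(f"{user}: {n} login event(s) from unexpected country {country}")
```

A real deployment would likely need to allow for travel and VPN use, so a result like this is better treated as a prompt to investigate than as proof of compromise.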

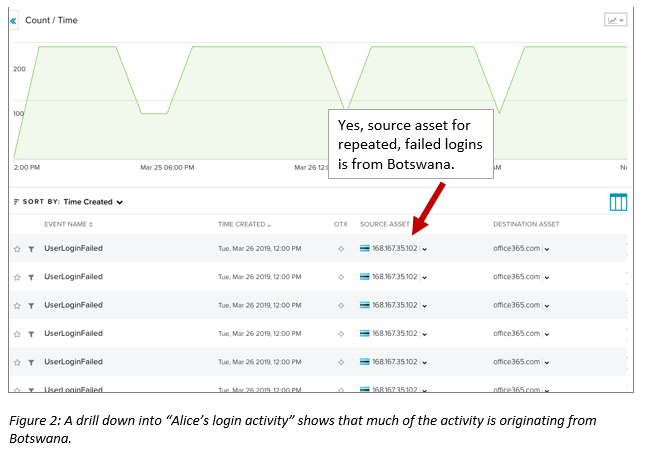

We can drill down to see even more detail on Alice’s login activity, and we notice that the source asset for the login is definitely in Botswana. Hmmmm, this doesn’t look good.

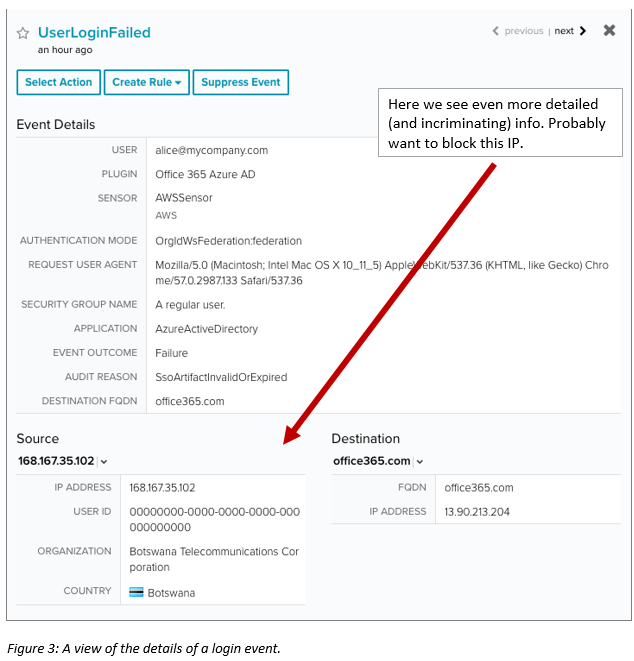

Drilling down even deeper, we see even more information (Figure 3). Unless Alice has made a recent trip to Africa, and is now trying to work while on vacation, this is definitely an indication that something is not right — probably a brute force authentication attack. From here, you have multiple options, such as going in and blocking that particular IP address.

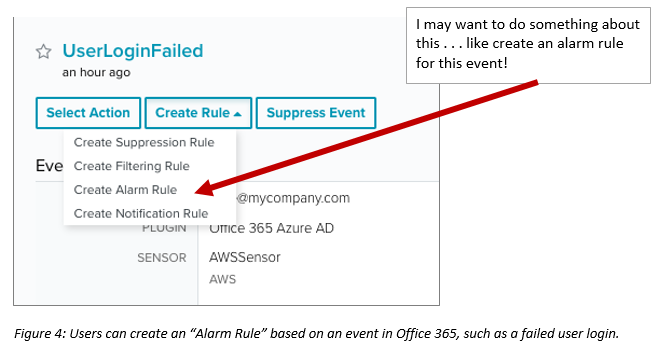

Within AlienVault USM Anywhere, you can also do things such as creating an “alarm rule” for the future.

Now let’s make this easier — AT&T Alien Labs creates Office 365 correlation rules for you.

The AT&T Alien Labs team regularly updates our threat intelligence and writes correlation rules to detect threats in the cloud, including in your Office 365 SaaS environment. (Caveat: it’s impossible to write correlation rules for every threat in the universe, but we have created hundreds, and are continuously updating those rules as well as adding more daily).

For Office 365, for example, we’ve created a correlation rule for “Delivery & Attack | Brute Force Authentication | IMAP,” i.e., using automation to repeatedly test a username/password field by using random inputs such as dictionary terms or known username/password lists.
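As a rough illustration of what a brute force authentication check looks for, the sketch below flags a user and source IP pair once failed logins pile up inside a short sliding window. It is not the Alien Labs rule itself: the function name, the threshold of 5, the 5-minute window, and the sample data are all assumptions chosen for the example.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta


def brute_force_suspects(failures, threshold=5, window=timedelta(minutes=5)):
    """Return (user, source_ip) pairs with at least `threshold` failed logins inside `window`.

    `failures` is an iterable of (timestamp, user, source_ip) tuples sorted by timestamp.
    """
    recent = defaultdict(deque)  # (user, ip) -> timestamps of recent failures
    suspects = set()
    for ts, user, ip in failures:
        q = recent[(user, ip)]
        q.append(ts)
        # Drop failures that have fallen out of the sliding window.
        while ts - q[0] > window:
            q.popleft()
        if len(q) >= threshold:
            suspects.add((user, ip))
    return suspects


start = datetime(2019, 3, 1, 9, 0, 0)
# Six failed logins for alice, ten seconds apart, from one documentation-range IP.
sample = [(start + timedelta(seconds=10 * i), "alice", "203.0.113.7") for i in range(6)]
# Two failures for bob an hour apart: ordinary typos, not a burst.
sample += [(start, "bob", "198.51.100.4"), (start + timedelta(hours=1), "bob", "198.51.100.4")]
sample.sort()

print(brute_force_suspects(sample))
```

Keying on the user and IP pair keeps the example small. An attacker who spreads attempts across many IPs would need a per-user count instead, which is one reason real correlation rules tend to be more involved than this.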

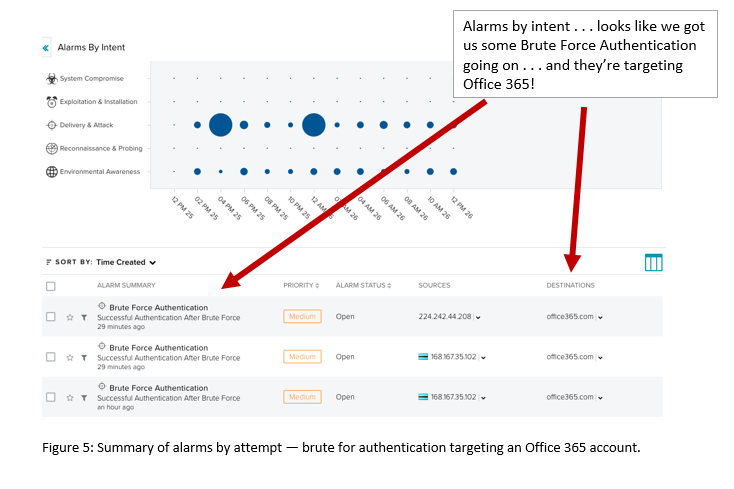

The screenshot in figure 5 shows a summary of alarms triggered for “successful authentication after brute force.” The summary also includes all the associated events (a number of failed user logins), the priority of the alarms, username, source IP, and more.
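The "successful authentication after brute force" pattern can be sketched as a run of failed logins for a user followed shortly by a success. The Python below is a simplified illustration under stated assumptions, not the product's detection logic: the event tuple layout, `min_failures=5`, the 10-minute window, and the alarm fields are all hypothetical.

```python
from datetime import datetime, timedelta


def success_after_brute_force(events, min_failures=5, window=timedelta(minutes=10)):
    """Return alarms for successful logins preceded by many recent failures.

    `events` is a list of (timestamp, user, source_ip, outcome) tuples sorted by
    timestamp, where outcome is "failure" or "success".
    """
    failures = {}  # user -> list of (timestamp, source_ip) for failed logins
    alarms = []
    for ts, user, ip, outcome in events:
        if outcome == "failure":
            failures.setdefault(user, []).append((ts, ip))
        elif outcome == "success":
            recent = [(t, src) for t, src in failures.get(user, []) if ts - t <= window]
            if len(recent) >= min_failures:
                alarms.append({
                    "user": user,
                    "source_ip": ip,
                    "time": ts,
                    "failed_attempts": len(recent),
                })
            # A success resets the failure history for that user.
            failures[user] = []
    return alarms


start = datetime(2019, 3, 1, 9, 0, 0)
events = [(start + timedelta(seconds=5 * i), "alice", "203.0.113.7", "failure") for i in range(5)]
events.append((start + timedelta(seconds=30), "alice", "203.0.113.7", "success"))
# bob mistypes once and then logs in: should not raise an alarm.
events.append((start + timedelta(seconds=40), "bob", "198.51.100.4", "failure"))
events.append((start + timedelta(seconds=50), "bob", "198.51.100.4", "success"))

alarms = success_after_brute_force(events)
for alarm in alarms:
    print(alarm)
```

This case arguably deserves a higher priority than failed attempts alone, since the success at the end suggests the attacker may have found a working password.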

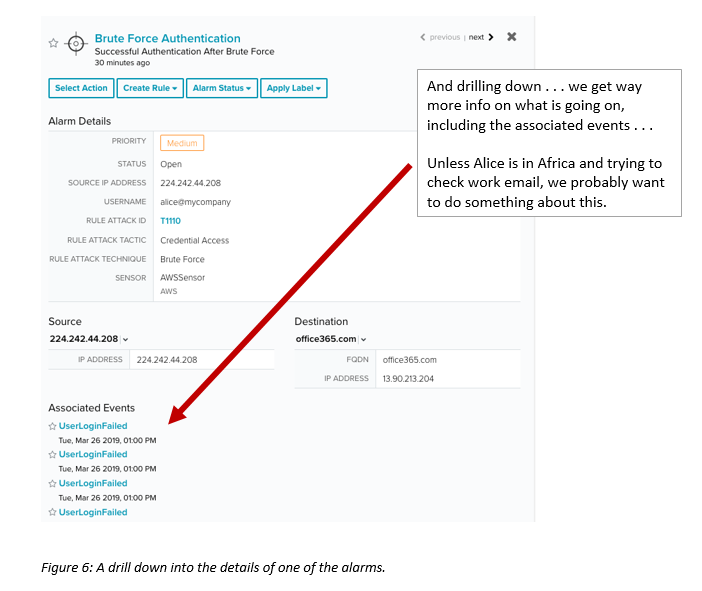

Users can drill down to get even more information, including associated events (i.e., we can see a number of user login attempts and failures). In addition, the alarm shows the MITRE ATT&CK “rule attack tactic” (credential access) and “rule attack technique” (brute force), which is good for those of you who are using the ATT&CK framework as a best practice in your threat detection and response strategy. (Alien Labs has mapped all its correlation rules to the ATT&CK framework. You can read more about the MITRE ATT&CK dashboard here.)

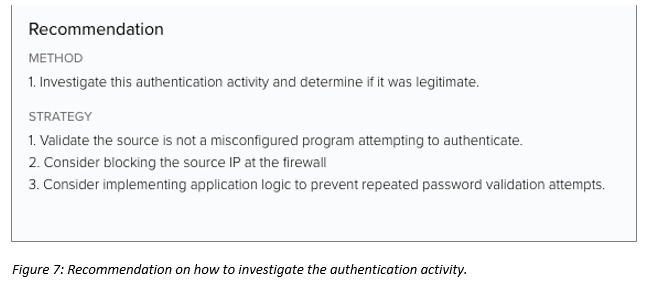

Alarms also include recommendations on what to do next, and how to do it (figure 7).

One final consideration in terms of protecting cloud accounts: they don’t live in a vacuum. If you’re like the bulk of organizations out there, you’re probably using multiple cloud service providers (IaaS, PaaS, and SaaS) combined with your on-prem network. Gaining visibility of all these environments, and the threats to them, in one place is key to being able to stay ahead of things like brute force account compromise in the cloud.

Tawnya Lancaster is Lead Product Marketing Manager for AT&T Alien Labs, Threat Intelligence. Read more AT&T Cybersecurity blogs here.